Whoa!

I remember the first time I watched a swap fail because of a stupid gas misestimate. It was ugly. My instinct said something felt off about the UI confirmations, and yeah—my wallet didn’t simulate the important bits. Initially I thought gas warnings were enough, but then realized that preflight simulation changes the whole risk equation for users interacting with composable DeFi primitives across chains.

Here’s the thing.

Transaction simulation isn’t just nice-to-have. It’s the difference between a recoverable mistake and a permanent loss. On one hand, a simple gas bump fixes most stuck-transaction problems. On the other hand, when you interact with contracts that call other contracts, a failed on‑chain attempt still burns the gas, and in a multi‑step flow it can leave earlier steps (an approval, a deposit) in place while the step you actually cared about never lands.

Seriously?

Yes. And this matters more on multiple chains where tooling quality varies and where explorers or RPC endpoints give inconsistent feedback.

Let me walk you through why simulation matters, what good simulation looks like, and where most wallets drop the ball. I’m biased toward tooling that shows you the guts before you sign. That preference shaped a lot of my early work in DeFi, and it still bugs me when a wallet hides complexity rather than exposing it clearly.

Why simulate at all?

Hmm…

Simulation gives you a prediction, not a promise. It lets you see whether a transaction will revert, whether a call will produce the expected token amounts, and whether any hooks (like fee-on-transfer tokens or callbacks) will run. It reduces surprise. My gut feels safer when I can preview the state changes a contract will attempt.

Practically speaking, a simulation can catch: reverted calls, out‑of‑gas scenarios, slippage beyond thresholds, approvals that are insufficient, and MEV‑style sandwich risk indicators when bundled with mempool monitoring.
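The slippage check in that list is plain arithmetic, so here is a minimal sketch of it. The function names are mine, not any wallet's API, and amounts are assumed to be integers in the token's smallest unit.

```python
def min_amount_out(quoted_out: int, slippage_bps: int) -> int:
    """Smallest acceptable output for a quote, given a tolerance in basis points."""
    if not 0 <= slippage_bps <= 10_000:
        raise ValueError("slippage_bps must be between 0 and 10000")
    # Integer math only: token amounts should never pass through floats.
    return quoted_out * (10_000 - slippage_bps) // 10_000


def exceeds_slippage(quoted_out: int, simulated_out: int, slippage_bps: int) -> bool:
    """True when the simulated output falls below the user's tolerance."""
    return simulated_out < min_amount_out(quoted_out, slippage_bps)
```

With a quote of 1,000,000 units and a 50 bps tolerance, anything under 995,000 in the simulated result should raise a warning before the user signs.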

Something felt off about the phrase “prediction” above, though—because simulation depends on the input state, node sync, and mempool differences.

Okay, so check this out—

RPC nodes can return different results for the same simulated call depending on their mempool state, and they might lag the latest blocks. This means a simulation run against a single node could trail the live chain by a few blocks or miss pending transactions.

Initially I thought a single RPC check was fine, but then realized multi-source simulation is required if you want accuracy at scale.

That realization pushed teams to aggregate results from multiple nodes and providers, and to model mempool adversarially when users interact with sensitive positions.
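A rough sketch of what aggregating results could look like, assuming you have already collected one dry-run result per provider. The `SimResult` shape, the provider labels, and the `max_lag` threshold are all hypothetical, and this only compares results; it does not fetch them.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class SimResult:
    provider: str      # hypothetical provider label
    block_number: int  # block the node simulated against
    success: bool      # False if the dry-run reverted
    return_data: str   # hex-encoded return or revert data


def aggregate(results: list, max_lag: int = 2) -> dict:
    """Compare dry-run results from several nodes and report how well they agree."""
    if not results:
        raise ValueError("need at least one result")
    head = max(r.block_number for r in results)
    # Nodes too far behind the freshest one are reported, not counted.
    fresh = [r for r in results if head - r.block_number <= max_lag]
    stale = sorted(r.provider for r in results if head - r.block_number > max_lag)
    votes = Counter((r.success, r.return_data) for r in fresh)
    (success, return_data), count = votes.most_common(1)[0]
    return {
        "success": success,
        "return_data": return_data,
        "agreement": count / len(fresh),
        "unanimous": len(votes) == 1,
        "stale_providers": stale,
    }
```

Anything short of unanimous agreement is worth surfacing to the user rather than silently picking the majority answer.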

What does a good multi‑chain simulation system do?

Really?

It does several things. First, it performs a dry‑run of the exact transaction data against a state snapshot and reports the result. Second, it models gas and base fee variability. Third, it simulates internal calls and logs, showing token transfers and approvals that might be abstracted away in standard UIs.

Long story short: you want to see the full call graph. Otherwise you’re signing in the dark.

On top of that, advanced systems estimate probable outcomes when state changes between simulation and broadcast are likely, and they warn you if slippage protection won’t save you from an atomic failure or frontrun. They also surface data about “what the contract may do”—like whether it can take custody of tokens, set approvals, or mint new tokens.

Here’s what I mean by “what the contract may do”: some contracts include callbacks that execute arbitrary code on transfer, and seeing that in a simulation can be the difference between harmless and catastrophic.

Common failure modes simulation should catch

Whoa!

Reverts on complex batched transactions. These are brutal because you pay for the gas every call consumed up to the failure, and then the revert undoes all of the expected outcomes. A good simulator marks where reverts occur and why.
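Here is one way to mark where a revert happened, as a sketch. It assumes the trace is a nested dictionary in the style of Geth's `callTracer` output (`to`, `input`, `calls`, and `error` / `revertReason` on failing frames); other tracers use different field names, so treat the keys as assumptions.

```python
from typing import Optional


def find_revert(frame: dict, path: tuple = ()) -> Optional[dict]:
    """Return the deepest failing frame in a nested call trace, or None."""
    if "error" not in frame:
        return None
    # Prefer a failing child: the innermost failure is usually the root cause.
    for index, child in enumerate(frame.get("calls", [])):
        found = find_revert(child, path + (index,))
        if found is not None:
            return found
    return {
        "path": path,  # child indexes from the top-level call down
        "to": frame.get("to"),
        "selector": frame.get("input", "0x")[:10],
        "reason": frame.get("revertReason") or frame["error"],
    }
```

The "innermost failure" rule is a heuristic. A contract can catch a failing subcall and then revert for an unrelated reason, so the full trace should stay available for anyone who wants to check.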

Front‑running and MEV exposure. Simulation paired with mempool analysis can flag when your swap is likely to be sandwiched or when a borrow might be liquidated mid‑execution.

Approval mistakes and allowance race conditions. Some dapps need an allowance at least as large as the amount they intend to pull and will fail if you approve less.

Failed cross‑contract interactions. For example, a DeFi strategy might call a lending pool that calls a yield manager; if any subcall fails, the top level can revert and roll back intermediate steps that had already succeeded within the transaction, which is maddening.

Also very important: token standards quirks. Fee‑on‑transfer tokens, rebasing tokens, or malicious tokens that misuse hooks should be visible in simulation traces so the user knows what will actually end up in their wallet.
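To show what actually ends up in the wallet, you can net out the transfers seen in the simulation. This is a sketch under assumptions: the transfer records are taken to be already decoded into dictionaries with `token`, `from`, `to`, and `amount` keys, which is my own layout rather than any standard one.

```python
from collections import defaultdict


def token_deltas(transfers: list, wallet: str) -> dict:
    """Net change per token for `wallet`, from simulated transfer records."""
    wallet = wallet.lower()
    deltas = defaultdict(int)
    for t in transfers:
        if t["to"].lower() == wallet:
            deltas[t["token"]] += t["amount"]
        if t["from"].lower() == wallet:
            deltas[t["token"]] -= t["amount"]
    return dict(deltas)


def shortfall_bps(expected: int, received: int) -> int:
    """How far the received amount falls short of the expected one, in basis points."""
    if expected <= 0:
        raise ValueError("expected must be positive")
    return max(expected - received, 0) * 10_000 // expected
```

A consistent shortfall between the amount a contract sent and the amount the wallet received is a hint that the token takes a fee on transfer, and that is exactly the kind of thing a plain "swap 1000 for 1000" summary hides.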

Multi‑chain complexities

Hmm…

Every chain has its own timing, fee market, and node ecosystem. Optimism and Arbitrum have different sequencer behaviors. Polygon nodes might be faster but less consistent. Even EVM‑compatible chains diverge in gas token economics.

So you need per‑chain simulation models, not one-size-fits-all logic.

My first approach was naive: run the same simulator for all chains. That failed fast. Chains with fast finality can make mempool warnings less relevant, while others with slow propagation require much more conservative estimates and richer warnings.

Cross‑chain bridges make simulation even hairier because many bridging flows include off‑chain finalization steps and relayer behavior that can’t be perfectly simulated. For those, the best you can do is warn about trust assumptions and provide an estimated probability of completion.

I’ll be honest—some of this feels like art more than science. But we can improve the art by combining deterministic on‑chain dry‑runs with heuristics learned from historical TX patterns.

UX: how to show simulation to regular people

Here’s the thing.

Developers love call graphs and internal traces, but most users want “Will I lose money?” and “Can I recover it?” A good UI translates deep trace data into plain language without dumbing it down entirely.

For example: show the predicted token deltas, the exact approval scopes, and a red/yellow/green risk indicator backed by trace snippets. Let power users expand to see the full logs. Let novices see plain English summaries.
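A minimal sketch of the red/yellow/green idea, assuming the simulator has already produced a list of finding codes. The codes, their levels, and the messages are all made up for illustration; a real wallet would tune them.

```python
from enum import IntEnum


class Risk(IntEnum):
    GREEN = 0
    YELLOW = 1
    RED = 2


# Hypothetical finding codes and the level each one maps to.
LEVELS = {
    "WILL_REVERT": Risk.RED,
    "UNLIMITED_APPROVAL": Risk.RED,
    "SLIPPAGE_EXCEEDED": Risk.YELLOW,
    "STALE_SIMULATION": Risk.YELLOW,
}

MESSAGES = {
    "WILL_REVERT": "This transaction is expected to fail. You would still pay gas.",
    "UNLIMITED_APPROVAL": "This grants unlimited spending rights over a token.",
    "SLIPPAGE_EXCEEDED": "You may receive less than your slippage setting allows.",
    "STALE_SIMULATION": "The preview may be out of date.",
}


def summarize(findings: list) -> tuple:
    """Collapse findings into one risk level and one plain-English line."""
    if not findings:
        return Risk.GREEN, "No problems found in the simulation."
    # Unknown codes default to YELLOW so new findings are never shown as safe.
    worst = max(findings, key=lambda code: LEVELS.get(code, Risk.YELLOW))
    level = LEVELS.get(worst, Risk.YELLOW)
    return level, MESSAGES.get(worst, "The simulation flagged something unusual.")
```

The one-line summary is what novices see; the findings list and the trace behind it are what power users expand.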

Okay, so a practical microflow is: when you click confirm, show a one‑line risk summary, a detailed trace beneath it, and a “why this matters” tooltip that links to more resources.

Wallets that integrate simulation as a core UX element—rather than as an optional developer tool—deliver fewer support tickets and fewer “I lost my funds” threads on Discord. I say that from direct field ops and from watching support queues light up less when users had preflight warnings.

I’m not 100% certain every user reads warnings, though. Human behavior is stubbornly inconsistent, and some will click through anything if they want the yield.

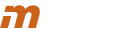

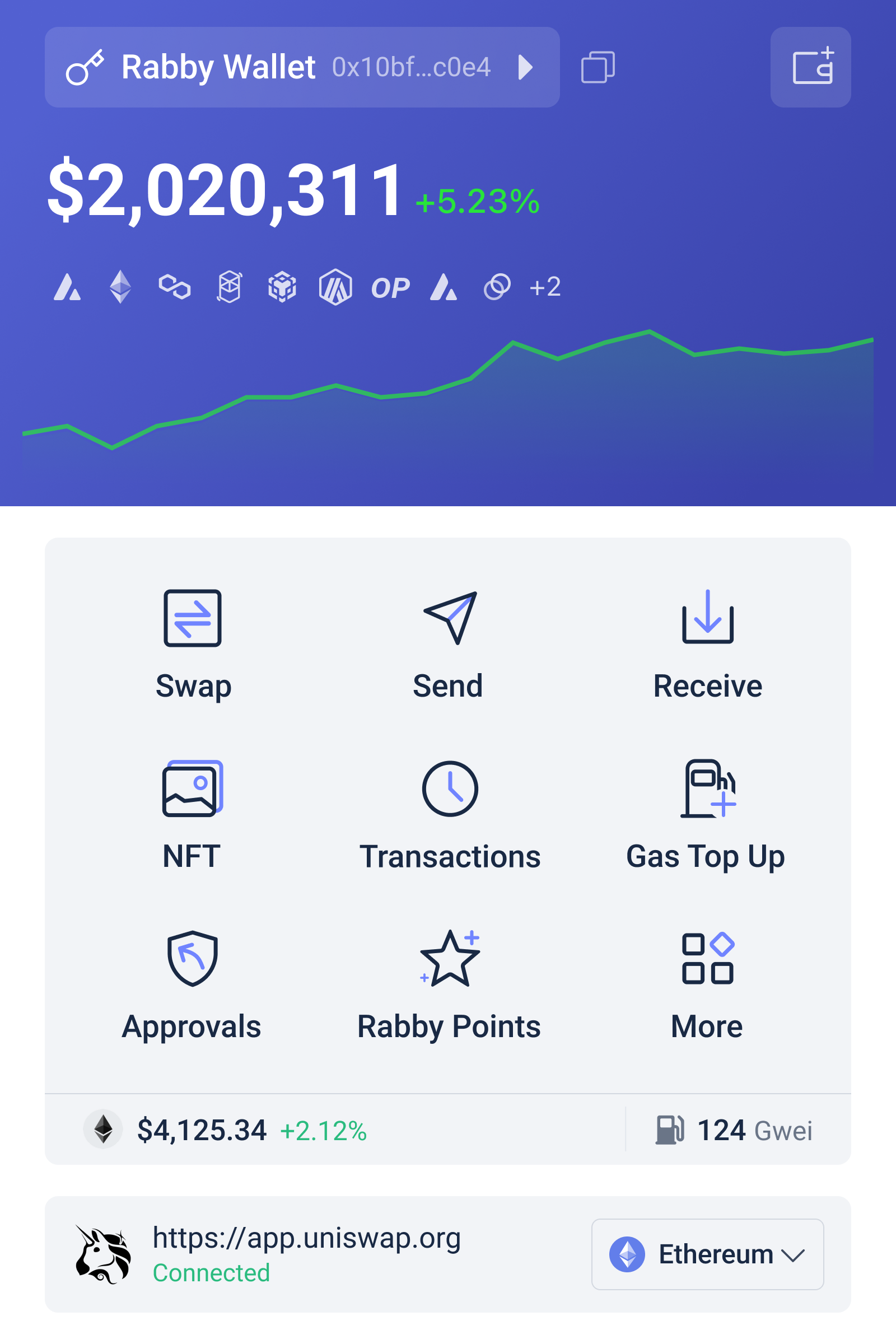

Why Rabby and similar wallets matter

Seriously?

Tools that prioritize transaction simulation, mempool awareness, and clear UX signals are gaining real trust with DeFi users. A wallet that surfaces preflight simulations for swaps, contract interactions, and batched operations helps users make informed decisions—fast.

I’ve been testing several wallets and the ones that bake simulation into the confirm flow reduce accidental approvals and token losses noticeably.

If you’re exploring wallets that emphasize these features, check out Rabby. It’s one of the projects pushing transaction simulation and visibility into the foreground instead of burying it.

That said, no wallet is a silver bullet. Simulation can be wrong if RPCs are out of sync, if the data you sign is not the data that was simulated because a malicious dapp tampered with it, or if off‑chain actors interfere with expected flows. The best approach is layered: simulation plus hardware signing plus clear provenance of the dapp interaction.

Developer tips: build better simulations

Whoa!

Record the full call trace during dry‑runs, including internal transfers and emitted events. Replay with different base fee scenarios to estimate gas risk. Aggregate node responses from multiple providers to detect inconsistent mempool states.
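For the base fee scenarios, here is a sketch of the update rule from EIP-1559 as it applies on Ethereum mainnet. Other chains tune these parameters, so treat the constant as an assumption to check per chain. The `gas_target` default is a placeholder; the worst case below does not depend on its value.

```python
BASE_FEE_MAX_CHANGE_DENOMINATOR = 8  # EIP-1559 value on Ethereum mainnet


def next_base_fee(base_fee: int, gas_used: int, gas_target: int) -> int:
    """Base fee of the next block, given how full the parent block was."""
    if gas_used == gas_target:
        return base_fee
    if gas_used > gas_target:
        delta = (base_fee * (gas_used - gas_target)
                 // gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR)
        return base_fee + max(delta, 1)
    delta = (base_fee * (gas_target - gas_used)
             // gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR)
    return base_fee - delta


def worst_case_base_fee(base_fee: int, blocks: int, gas_target: int = 15_000_000) -> int:
    """Base fee after `blocks` consecutive full blocks (gas used = 2x target)."""
    for _ in range(blocks):
        base_fee = next_base_fee(base_fee, 2 * gas_target, gas_target)
    return base_fee
```

Starting from 1 gwei, one full block gives 1.125 gwei and two give 1.265625 gwei. If the user's max fee sits below the worst case for the number of blocks you expect to wait, the transaction can stall even though the dry-run succeeded.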

Also, maintain a signature database to map function selectors to human‑readable methods, and map those to known risk patterns (e.g., “setApprovalForAll”, “transferAndCall”).
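A tiny version of such a database might look like the sketch below. The four selectors are the well-known ones for these ERC-20 and ERC-721 methods; the risk notes are my own wording, and a real table would carry many more entries (transferAndCall among them).

```python
# Well-known 4-byte selectors: the first four bytes of keccak256(signature).
SELECTORS = {
    "0x095ea7b3": "approve(address,uint256)",
    "0xa9059cbb": "transfer(address,uint256)",
    "0x23b872dd": "transferFrom(address,address,uint256)",
    "0xa22cb465": "setApprovalForAll(address,bool)",
}

# Hypothetical risk notes keyed by signature.
RISK_NOTES = {
    "approve(address,uint256)": "grants a spending allowance",
    "setApprovalForAll(address,bool)": "grants control over every token in a collection",
}

MAX_UINT256 = 2**256 - 1


def describe_call(calldata: str) -> dict:
    """Map raw calldata to a known method and flag unlimited approvals."""
    data = calldata.lower()
    if data.startswith("0x"):
        data = data[2:]
    selector = "0x" + data[:8]
    signature = SELECTORS.get(selector)
    info = {"selector": selector, "signature": signature,
            "risk": RISK_NOTES.get(signature)}
    # approve calldata: 4-byte selector, 32-byte spender, 32-byte amount.
    if signature == "approve(address,uint256)" and len(data) >= 8 + 128:
        amount = int(data[8 + 64:8 + 128], 16)
        info["unlimited"] = amount == MAX_UINT256
    return info
```

An unknown selector is itself a signal: if the wallet cannot name the method, it should say so instead of showing a generic "contract interaction" label.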

And please: simulate with the exact calldata and value you intend to broadcast. Off‑by‑one errors in calldata can produce wildly different results.
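One way to keep yourself honest here is to build the dry-run request from the same fields you will broadcast, then check that they still match right before signing. The sketch below only builds and compares the JSON-RPC body for `eth_call`; the helper names and the transaction dictionary layout are assumptions, and sending the request is left out.

```python
import json


def build_eth_call(sender: str, to: str, calldata: str, value_wei: int,
                   block: str = "latest") -> str:
    """JSON-RPC request body that dry-runs a transaction without signing it."""
    call = {"from": sender, "to": to, "data": calldata, "value": hex(value_wei)}
    return json.dumps({"jsonrpc": "2.0", "id": 1, "method": "eth_call",
                       "params": [call, block]})


def matches_transaction(request_body: str, tx: dict) -> bool:
    """True when the simulated call has the same target, calldata, and value as `tx`."""
    call = json.loads(request_body)["params"][0]
    return (call["to"].lower() == tx["to"].lower()
            and call["data"].lower() == tx["data"].lower()
            and int(call["value"], 16) == tx["value"])
```

If `matches_transaction` ever returns False at confirm time, the preview the user saw describes a different transaction from the one about to be signed, and the wallet should re-simulate.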

Finally, expose machine‑readable warnings for integrators so dapps can show congruent messaging, rather than conflicting instructions that confuse users when a wallet says one thing and the dapp another.
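What "machine‑readable" could mean in practice, as a sketch: a small, versioned JSON payload with stable codes that a dapp can switch on. The field names and the severity vocabulary are hypothetical, not an existing standard.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class SimWarning:
    code: str      # stable identifier integrators can switch on
    severity: str  # "info", "warning", or "critical"
    message: str   # human-readable fallback text
    frame: str     # where in the call trace the issue was found


def to_payload(chain_id: int, warnings: list) -> str:
    """Serialize warnings into a versioned JSON document."""
    body = {
        "version": 1,
        "chainId": chain_id,
        "warnings": [asdict(w) for w in warnings],
    }
    return json.dumps(body, sort_keys=True)
```

Keying the dapp's messaging off `code` rather than `message` is what keeps the wallet and the dapp saying the same thing, even when each one words it differently.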

FAQ

Can simulation guarantee a transaction will succeed?

No. Simulation reduces uncertainty but cannot guarantee success because the on‑chain state and mempool can change between simulation and broadcast. However, simulation catches deterministic issues like reverts and insufficient approvals, and it flags probabilistic risks like MEV exposure.

Does simulating require extra permissions or expose private keys?

No. Simulation uses the unsigned transaction data and a node’s eth_call or equivalent. It does not require private keys. That said, some advanced simulations may send enriched context to third‑party providers, so pick providers with strong privacy policies if that concerns you.

How should I use simulation as a regular DeFi user?

Use simulation to check for reverts, unexpected approvals, and slippage beyond your threshold. Pay attention to red warnings and expand trace details when available. For high‑value or complex flows, pause and cross‑verify the simulation across another RPC or a trusted tool before signing.

In the end I came back to a personal credo: show the user everything you can without overwhelming them. Let the curious drill down. Let the cautious get clear yes/no guidance. Let the power user see the raw trace. This layered transparency is what will make multi‑chain DeFi safer for everyone.

I’m biased, but if wallets continue to hide complexity we won’t tame smart‑contract risk. The next era of tooling needs to be honest and visible. Somethin’ tells me the winners will be those that simulate first, educate second, and ship safeguards third…